Porter Standard brings the experience of a PaaS to your own cloud account. You get the flexibility of running your own enterprise-grade infrastructure in your own VPC, without actually worrying about how it's provisioned or managed - you just have to bring your GitHub repository.

While there are many AWS services available for deploying FastAPI applications, from Elastic Beanstalk to serverless offerings like AWS Lambda and ECS Fargate, Porter gives you the benefits of EKS, such as scalability and availability, without the DevOps overhead: you don't have to write Infrastructure as Code (IaC), use the AWS Cloud Development Kit, or know anything about EKS at all. The combined cost of Porter and the EC2 instances it spins up is comparable to Fargate, without the issues associated with serverless, like cold starts.

In this guide, we're going to walk through using Porter to provision infrastructure in an AWS account, and then deploy a basic FastAPI application and have it up and running.

Note that to follow this guide, you'll need an account on Porter Standard along with an AWS account. While Porter itself doesn't have a free tier, if you're a startup that has raised less than 5M in funding, you can apply for the Porter Startup Deal. Combined with AWS credits, you can essentially run your infrastructure for free. We also offer a two-week free trial!

What We're Deploying

FastAPI is a relatively new API framework for Python, built from the ground up for performance and reliability. It supports async functions natively (so you get the benefits of asynchronous programming while staying in Python), and it is built on standard Python type hints, which it uses to validate requests and generate documentation. That helps you ship faster without getting bogged down by the pitfalls of dynamic typing. If you have some familiarity with Python and want to roll out your backend quickly, FastAPI is a great way to get started.

We're going to deploy a sample FastAPI server, but that doesn't mean you're restricted to FastAPI; you're free to use any other Python web framework. The sample is a simple application with a single endpoint, /, that demonstrates how you can push out a public-facing app on Porter with a public domain and TLS. The idea is to show how quickly a basic app can be deployed onto an AWS EC2 instance using Porter, so that you can then use the same flow to deploy your own Python code.

You can find the repository for this sample app here: https://github.com/porter-dev/fastapi-getting-started. Feel free to fork/clone it, or bring your own.

Getting started

Deploying web applications from a GitHub repository on Porter using AWS involves, broadly, the following steps:

- Provisioning infrastructure inside your AWS account using Porter.

- Creating a new app on Porter where you specify the repository, the branch, any build settings, as well as what you'd like to run.

- Building your app and deploying it onto an AWS EC2 instance (automatically handled by Porter).

Connecting your AWS account

On the Porter dashboard, head to Infrastructure and select AWS.

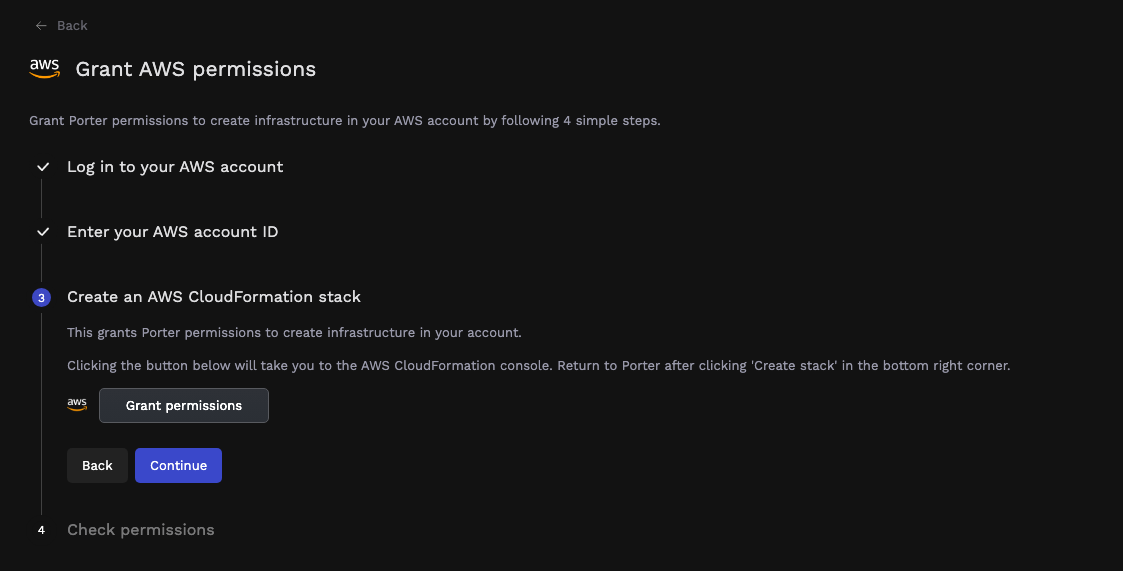

Here you're required to log into your AWS account and provide your AWS account ID to Porter. Clicking Grant permissions opens your AWS account and takes you to AWS CloudFormation, where you need to authorize Porter to provision a CloudFormation stack. This stack is responsible for provisioning all the IAM roles and policies Porter needs to provision and manage your infrastructure. Once you launch the stack, it takes a few minutes to complete:

Once the CloudFormation stack's created on AWS, you can switch back to the Porter tab, where you should see a message about your AWS account being accessible by Porter:

Provisioning infrastructure

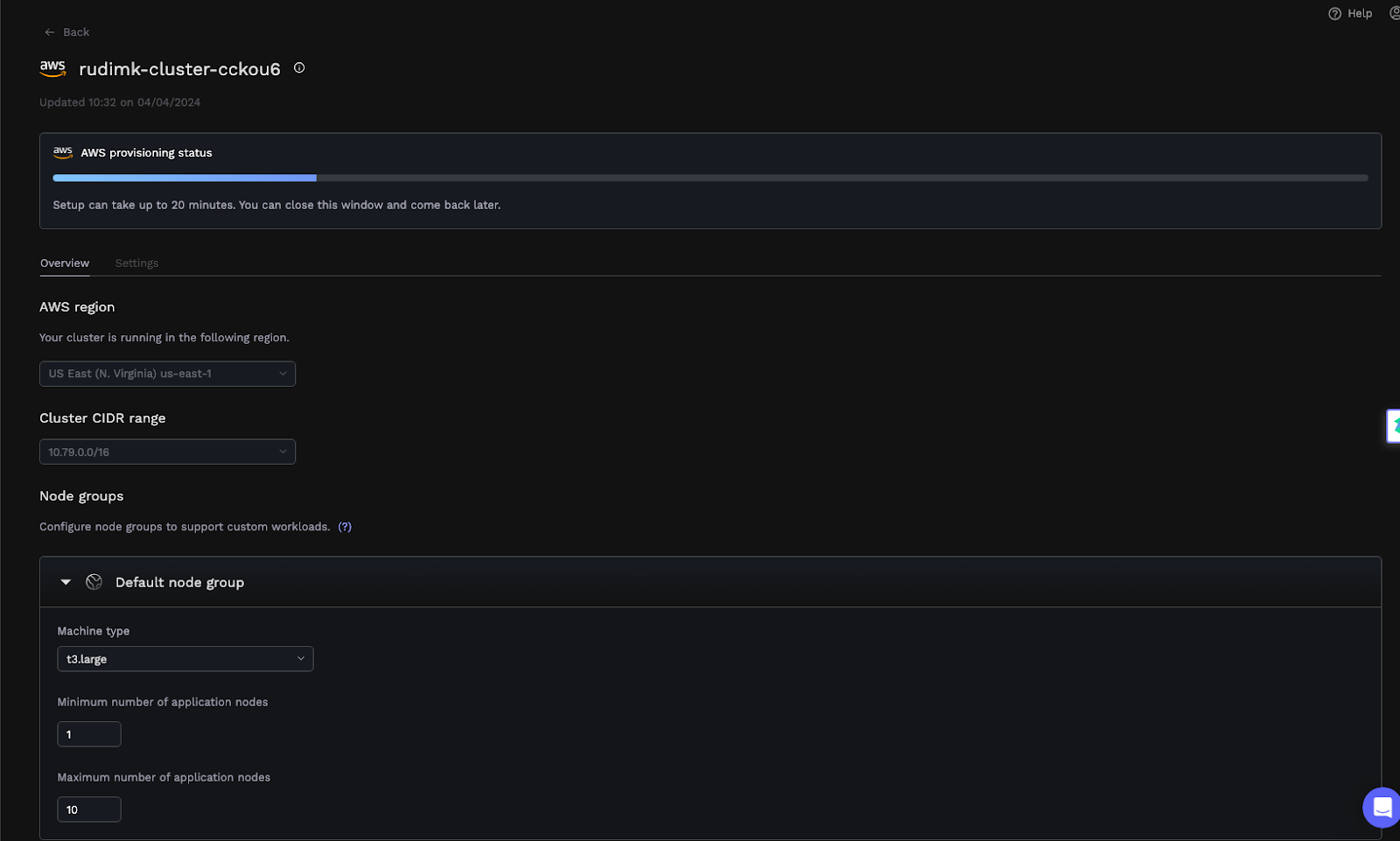

After connecting your AWS account to Porter, you'll see a screen with a form - the fields are pre-filled, with details about your cluster:

Ordinarily, the only two fields that should be tweaked are the cluster name and the region - feel free to change those. The other fields are usually changed if Porter detects a conflict between the proposed cluster's VPC and other VPCs in your account - these would be flagged during a preflight test, allowing you the option of tweaking those addresses. You can also choose a different instance type in this section for your cluster; while we tend to default to t3.medium instances, we support many other AWS EC2 instance types. Once you're satisfied, click Deploy.

At this stage, Porter will run preflight checks to ensure your AWS account has enough free quota for components like vCPUs and elastic IPs, and to detect any potential conflicts with address spaces belonging to other VPCs. If any issues are detected, they will be flagged on the dashboard along with troubleshooting steps.
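To make the address-space check concrete, here is a minimal sketch of how an overlap between a proposed VPC range and existing ranges can be detected using Python's standard ipaddress module. This is not Porter's preflight implementation; the function name and the CIDR blocks are hypothetical.

```python
import ipaddress


def find_cidr_conflicts(proposed: str, existing: list[str]) -> list[str]:
    """Return the existing CIDR blocks that overlap the proposed one."""
    proposed_net = ipaddress.ip_network(proposed)
    return [
        cidr
        for cidr in existing
        if ipaddress.ip_network(cidr).overlaps(proposed_net)
    ]


# Hypothetical address ranges, chosen only for illustration.
existing_vpcs = ["10.0.0.0/16", "10.78.128.0/20", "172.31.0.0/16"]
print(find_cidr_conflicts("10.78.0.0/16", existing_vpcs))  # ['10.78.128.0/20']
```

If the list comes back non-empty, picking a different range for the new cluster's VPC avoids the conflict.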

Provisioning your EKS cluster can take up to 20 minutes. Porter will also have access to your ECR repository. After this process, you won't really need to enter the AWS Management Console again to manage your applications.

Creating an App and Connecting Your GitHub Repository

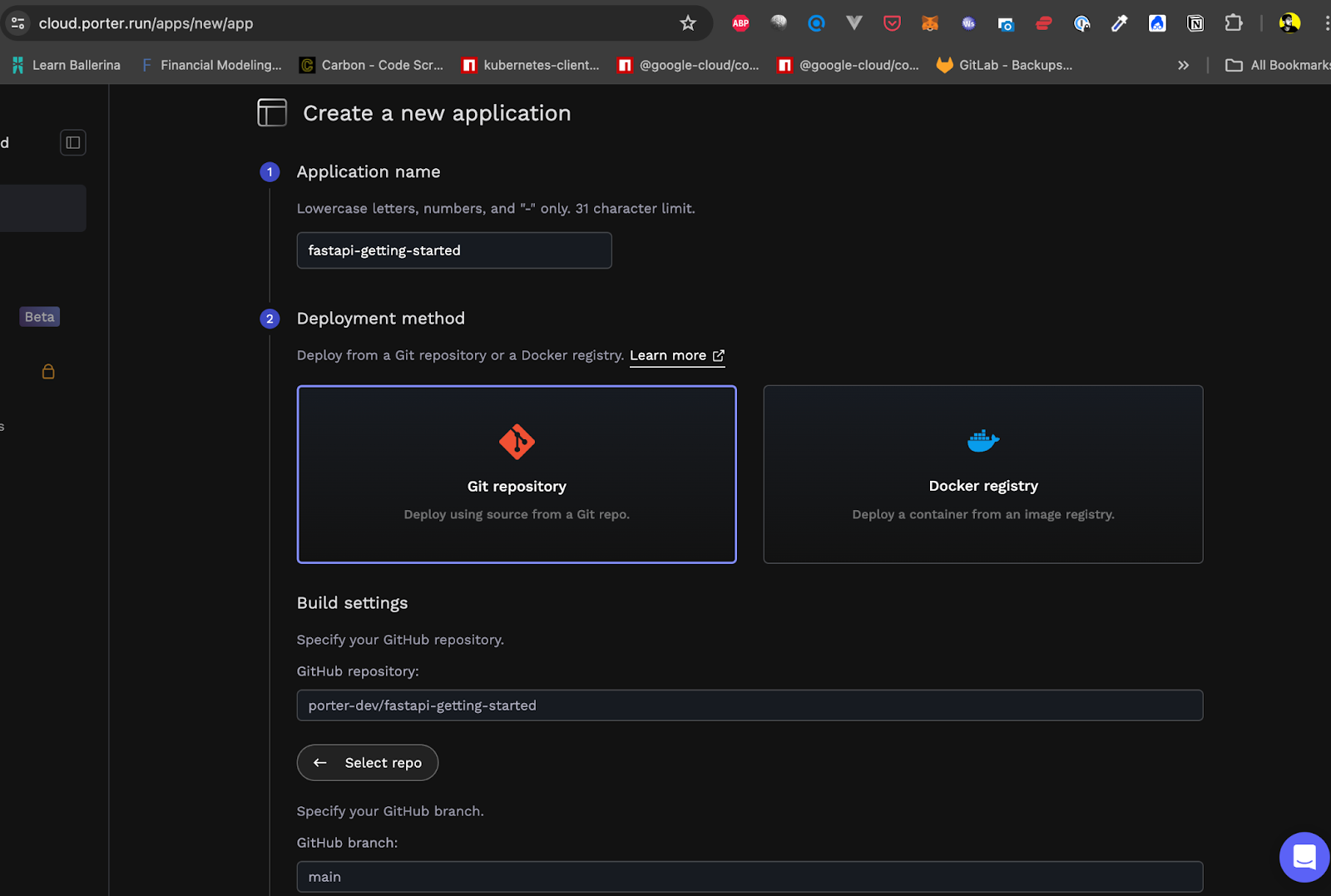

On the Porter dashboard, select Create a new application, which opens the following screen:

This is where you select a name for your app and connect a GitHub repository containing your code. Once you've selected the appropriate repository, select the branch you'd like to deploy to Porter. You can also deploy from a Docker registry.

If you already have your own CI/CD pipeline and maintain your own artifacts, like a Docker image, you can keep using them, but the onus of setting up CI/CD is on you. If you're looking for a more traditional, PaaS-like flow, just choose the repository you want to deploy and Porter will take care of CI/CD for you, using GitHub Actions under the hood.

Note

If you signed up for Porter using an email address instead of a GitHub account, you can easily connect your GitHub account to Porter by clicking the profile icon in the top right corner of the dashboard, selecting Account settings, and adding your GitHub account.

Configuring Build Settings

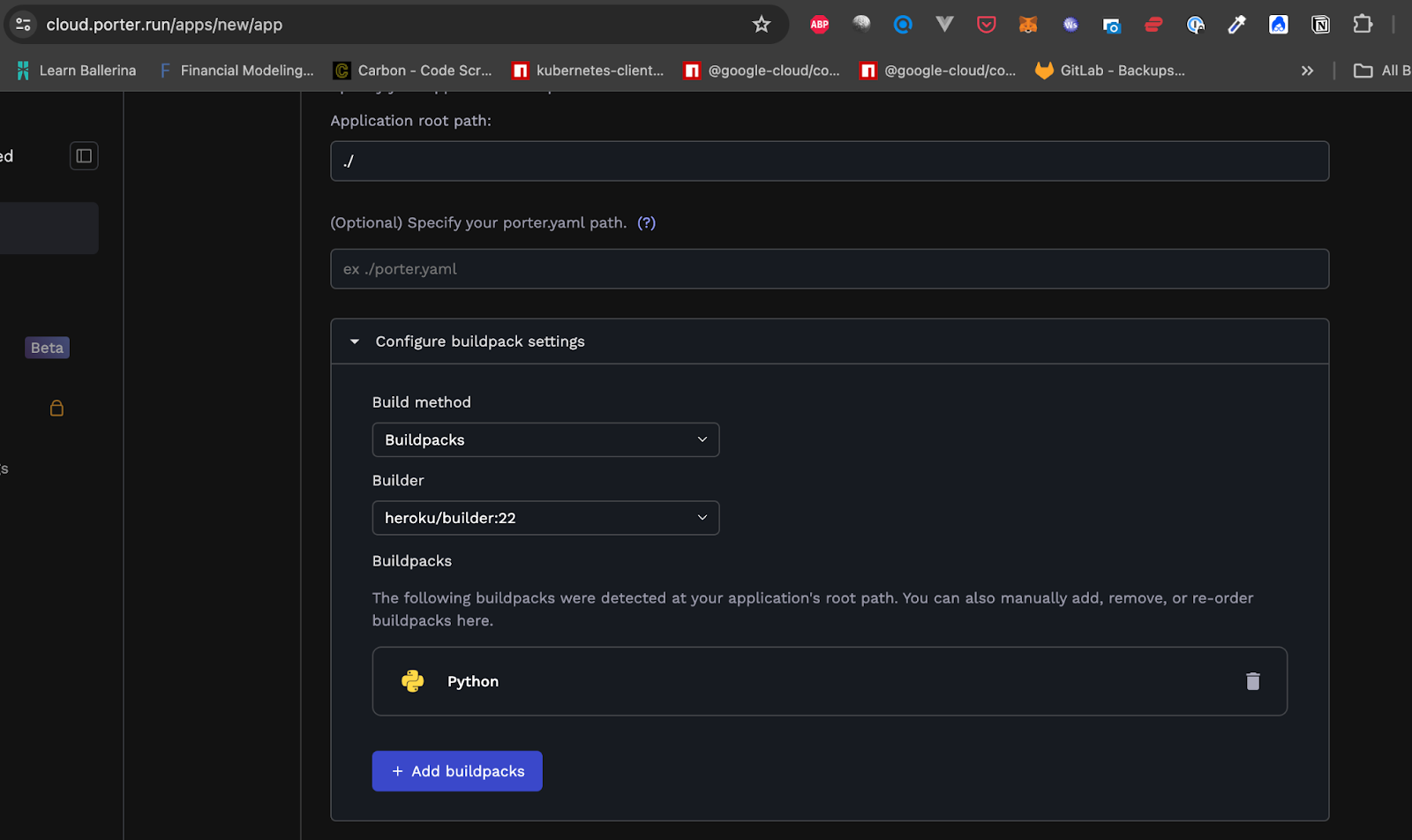

Porter can automatically detect what language your app is written in and select an appropriate buildpack to package your app for eventual deployment. Once you've selected the branch you wish to use, Porter will display the following screen:

You can further tune your build here. For instance, we're going to use the newer heroku/builder:22 builder for our simple application.

Configure Services

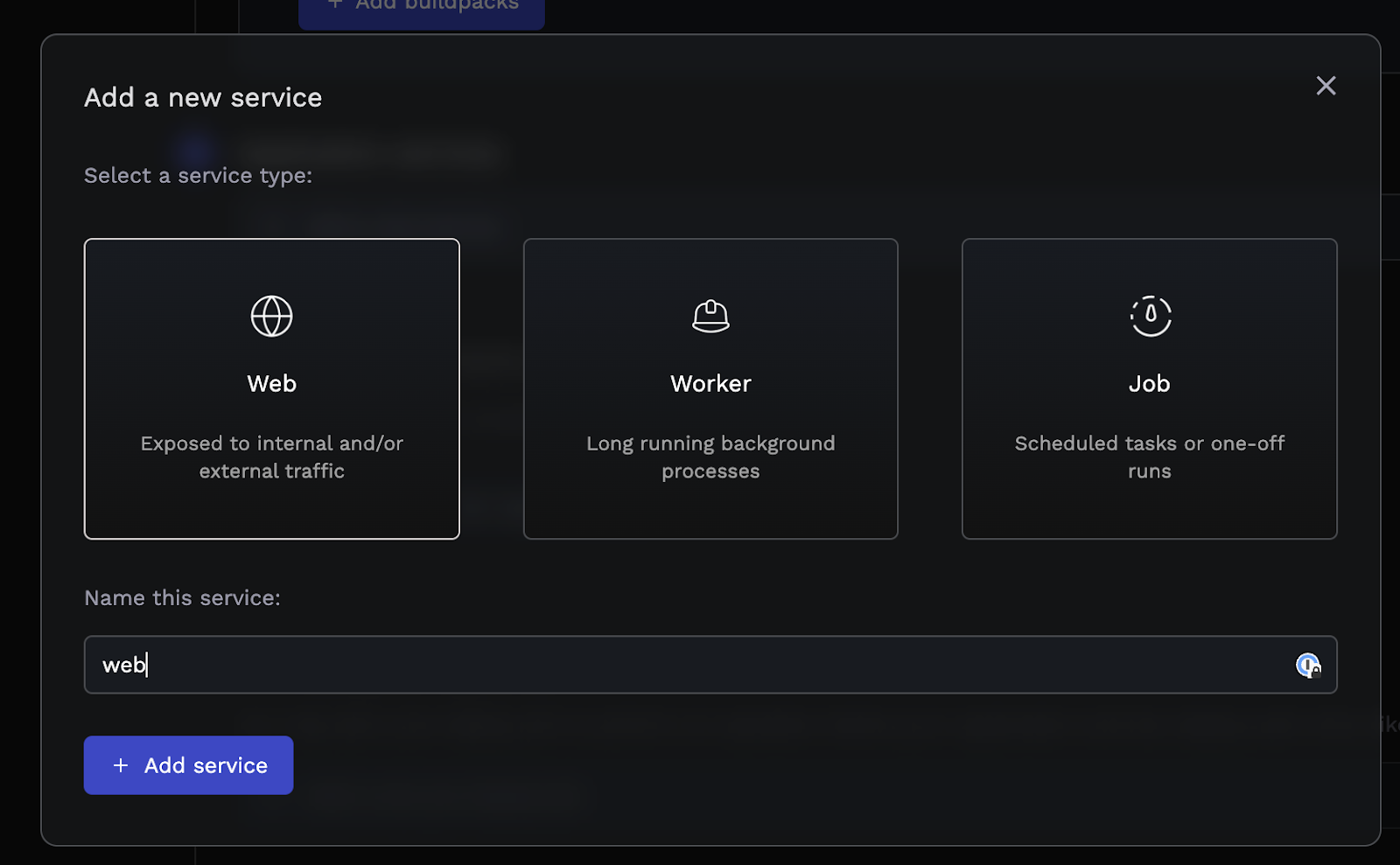

At this point, taking a quick look at applications and services is a good idea. An application on Porter is a group of services where each service shares the same build and the same environment variables. If your app consists of a single repository with separate modules for, say, an API, a frontend, and a background worker, then you'd deploy a single application on Porter with three separate services. Porter supports three kinds of services: web, worker, and job services.
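The relationship between an application and its services can be pictured with a small data model. The sketch below is purely illustrative and is not Porter's configuration format: the class names, field names, and commands are all hypothetical.

```python
from dataclasses import dataclass, field

# The three service kinds named in the text.
SERVICE_KINDS = {"web", "worker", "job"}


@dataclass
class Service:
    name: str
    kind: str      # one of SERVICE_KINDS
    command: str   # what this service runs (hypothetical commands below)


@dataclass
class Application:
    """One build and one set of environment variables, shared by all services."""
    name: str
    env: dict[str, str] = field(default_factory=dict)
    services: list[Service] = field(default_factory=list)

    def add_service(self, service: Service) -> None:
        if service.kind not in SERVICE_KINDS:
            raise ValueError(f"unknown service kind: {service.kind!r}")
        self.services.append(service)


# A single repository deployed as one application with three services.
example_app = Application(name="my-app", env={"LOG_LEVEL": "info"})
example_app.add_service(Service("api", "web", "uvicorn main:app --host 0.0.0.0 --port 8000"))
example_app.add_service(Service("queue", "worker", "python worker.py"))
example_app.add_service(Service("cleanup", "job", "python cleanup.py"))
```

Every service in example_app sees the same env dictionary, mirroring the idea that services in one application share a build and environment variables.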

Let's add a single web service for our app.

Configure Your Service

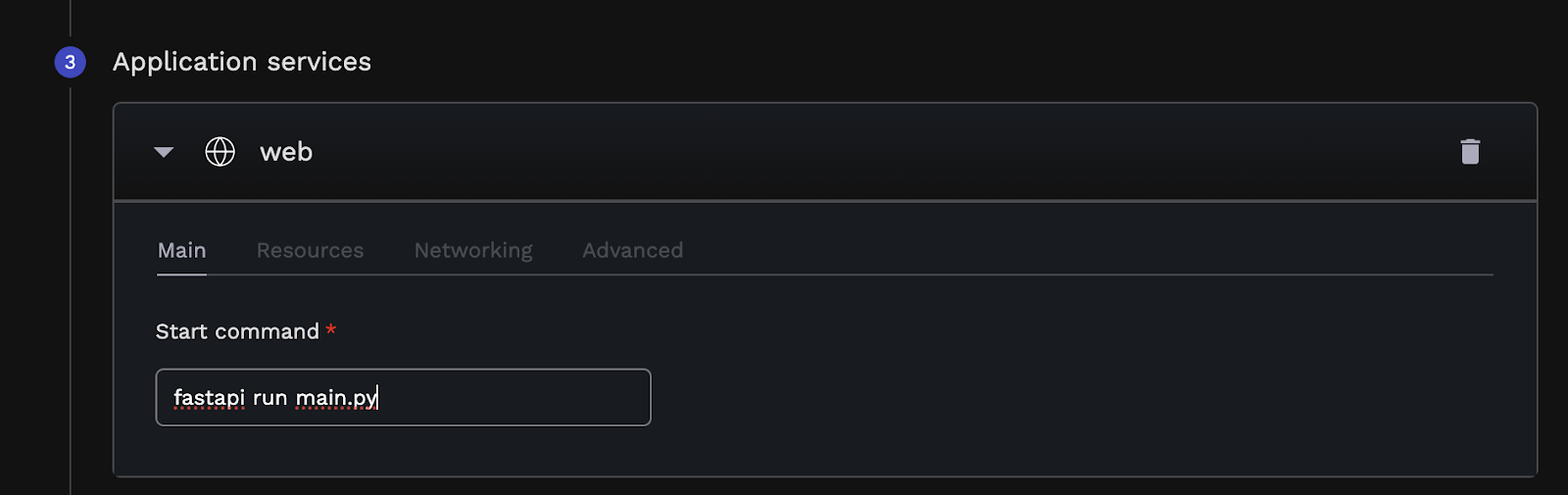

Now that we've defined a single web service, it's time to tell Porter how it runs. That means specifying what command to run for this service, what CPU/RAM levels to allocate, and how it will be accessed publicly.

You can define what command you'd like Porter to use to run your app in the Main tab. This is required if your app's being built using a buildpack; this may be optional if you opt to use a Dockerfile (since Porter will assume you have an ENTRYPOINT in your Dockerfile and use that if it exists).

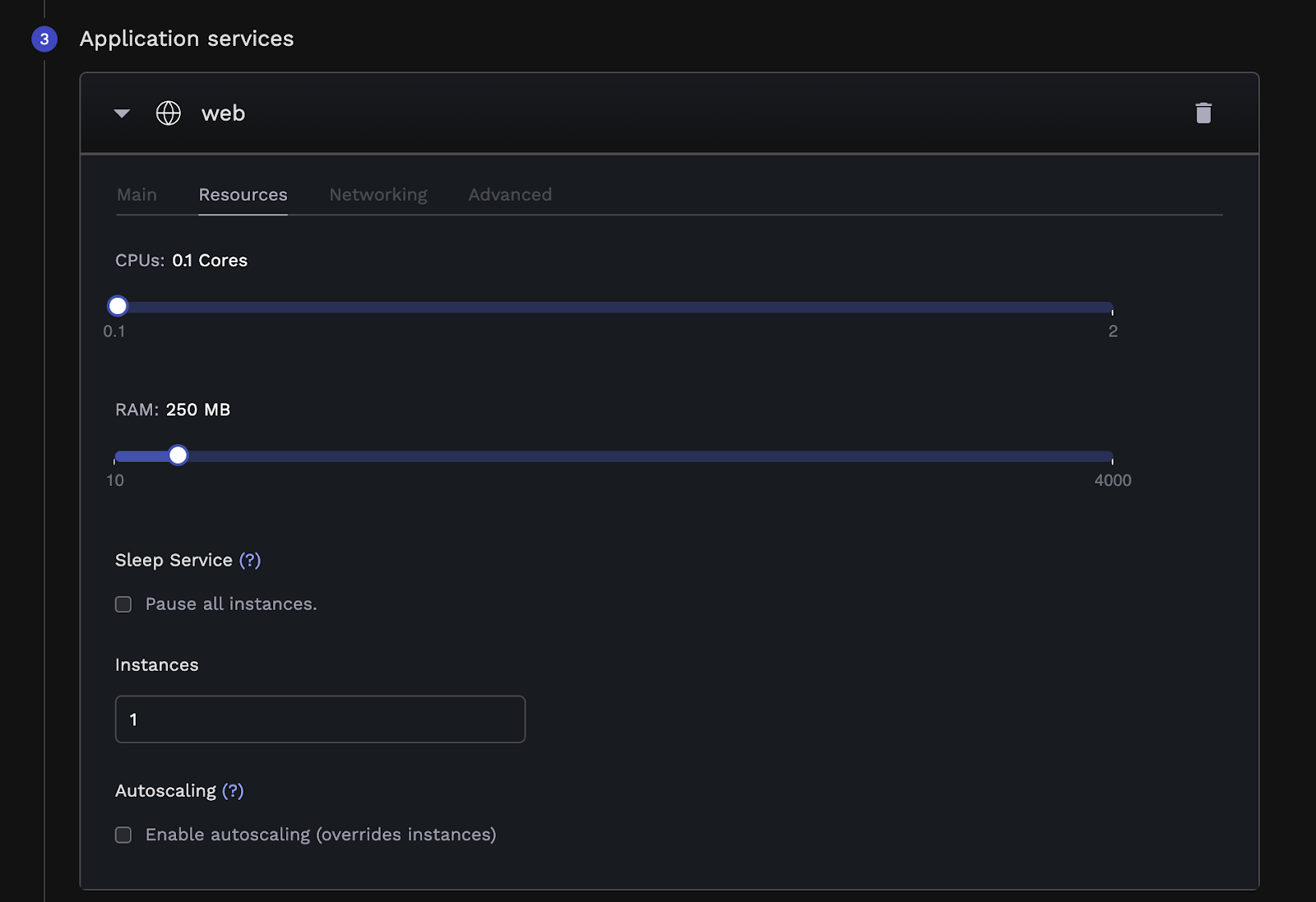

The Resources tab allows you to define how much CPU and RAM your app's allowed to access. Porter imposes a limit on the resources that can be used by a single app; if your app needs more, it might make sense to look at Porter Standard instead, which allows you to bring your own infrastructure and have more flexibility in terms of resource limits.

In this section, you can also define the number of replicas you'd like to run for this app and any autoscaling rules; these allow you to instruct Porter to add more replicas if resource utilization crosses a certain threshold.
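As a rough illustration of threshold-based scaling, the sketch below uses the replica formula documented for the Kubernetes Horizontal Pod Autoscaler: desired = ceil(current replicas × current utilization / target utilization). Whether Porter applies exactly this rule is an assumption; the function name and the replica bounds are hypothetical, and details such as tolerance windows are left out.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_utilization: float,
    target_utilization: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale the replica count in proportion to utilization, within bounds."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


# 2 replicas running at 90% CPU against a 60% target -> scale out to 3.
print(desired_replicas(2, 90, 60))  # 3
# 4 replicas running at 20% CPU against a 50% target -> scale in to 2.
print(desired_replicas(4, 20, 50))  # 2
```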

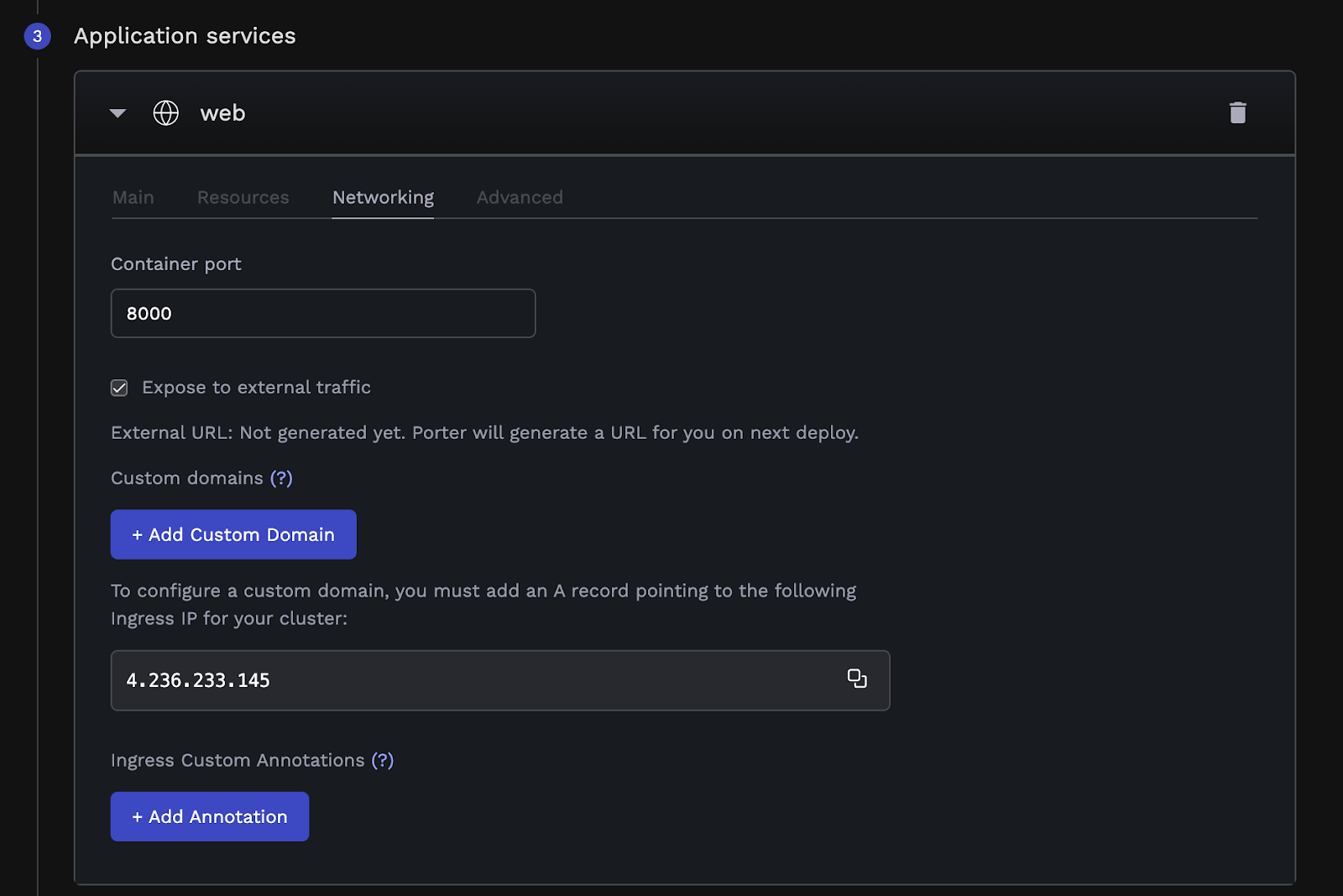

The Networking tab is where you specify what port your app listens on. Since we've kept FastAPI's default settings, we're going to set the port to 8000. When you deploy a web app on Porter, we automatically generate a public URL for you to use, but you can also bring your own domain by adding an A record to your DNS (Domain Name System) records that points your domain at Porter's public load balancer, and then adding the custom domain in this section. This can be done at any point, either while you're creating the app or later once you've deployed it.

Porter's default setup uses a network load balancer, but it also natively supports switching to an application load balancer if, for example, you want to use a WAF.

Note

If your app listens on localhost or 127.0.0.1, Porter won't be able to forward incoming connections and requests to your app. To that end, please ensure your app is configured to listen on 0.0.0.0 instead.
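One way to keep the listen address and port consistent is to build the start command from a single place. The sketch below assembles a uvicorn command line that binds to 0.0.0.0 on port 8000. The module path main:app and the PORT environment variable are assumptions for illustration; nothing here confirms that Porter sets PORT.

```python
import os
from typing import Mapping, Optional


def server_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Listen on all interfaces; take the port from PORT (hypothetical) or use 8000."""
    env = os.environ if env is None else env
    return {"host": "0.0.0.0", "port": int(env.get("PORT", "8000"))}


def uvicorn_command(
    app_path: str = "main:app",
    env: Optional[Mapping[str, str]] = None,
) -> list[str]:
    """Build the argument list for the uvicorn CLI using --host and --port."""
    settings = server_settings(env)
    return [
        "uvicorn", app_path,
        "--host", settings["host"],
        "--port", str(settings["port"]),
    ]


print(" ".join(uvicorn_command(env={})))
# uvicorn main:app --host 0.0.0.0 --port 8000
```

The printed string is the kind of value you would enter as the service's start command, with the same port entered in the Networking tab.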

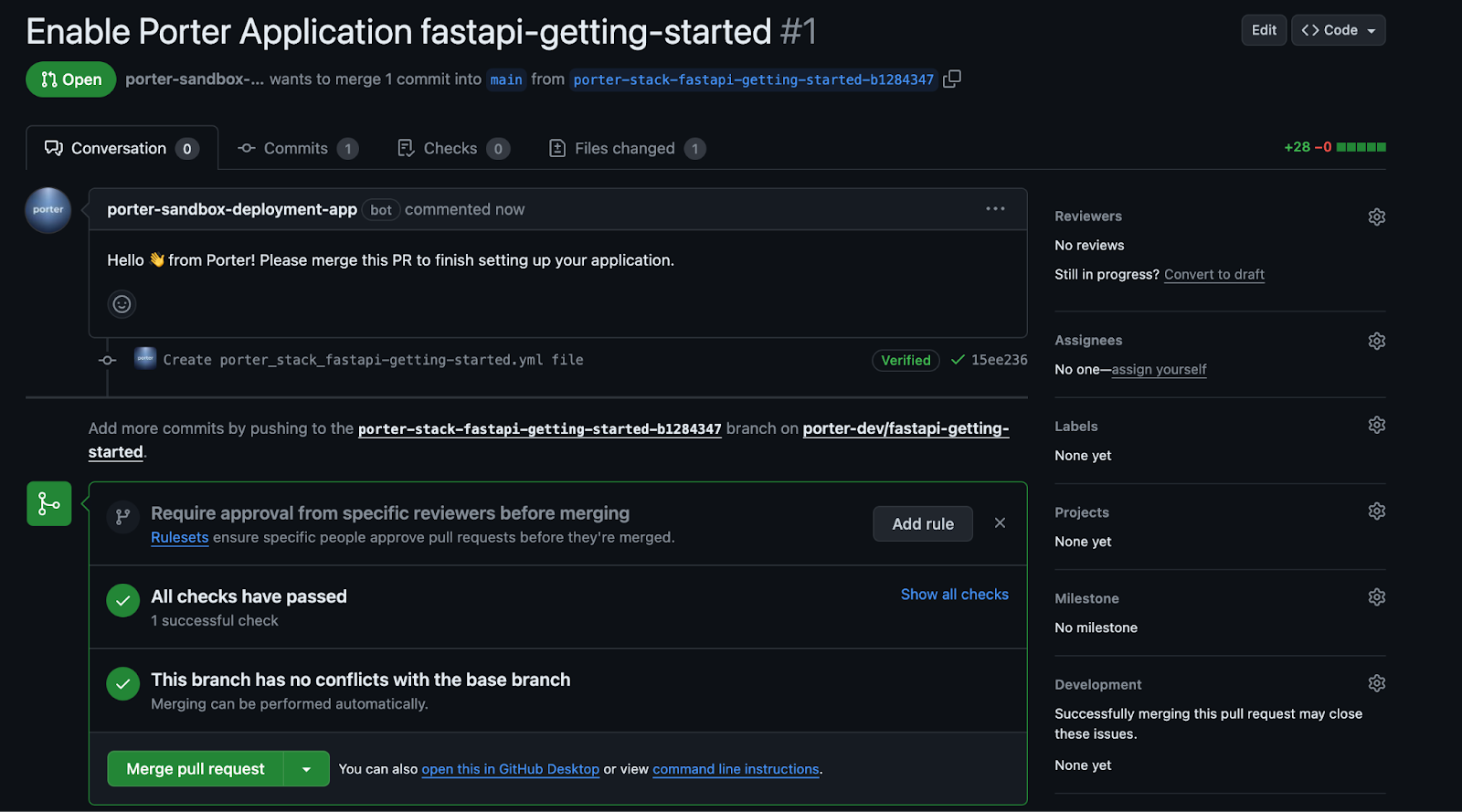

Review and Merge Porter's PR

Hitting Deploy will show you the contents of a GitHub Actions workflow that Porter would use to build and deploy your app:

This GitHub Actions workflow is configured to run every time you push a commit to the branch you specified earlier. When it runs, Porter applies the selected buildpack to your code, builds a final image, and pushes that image to Porter. Selecting Deploy app will allow Porter to open a PR in your repo, adding this workflow file:

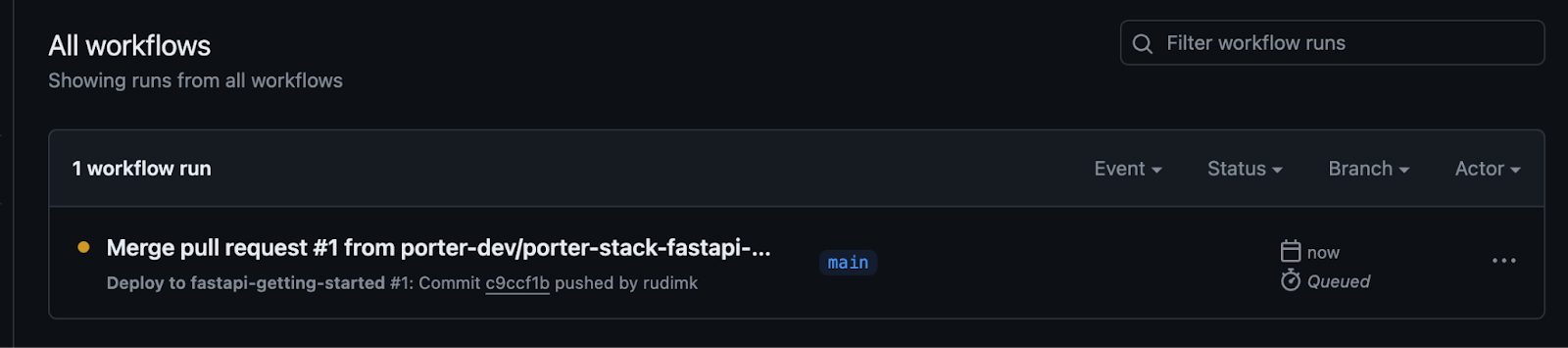

All you need to do is merge this PR, and your build will commence.

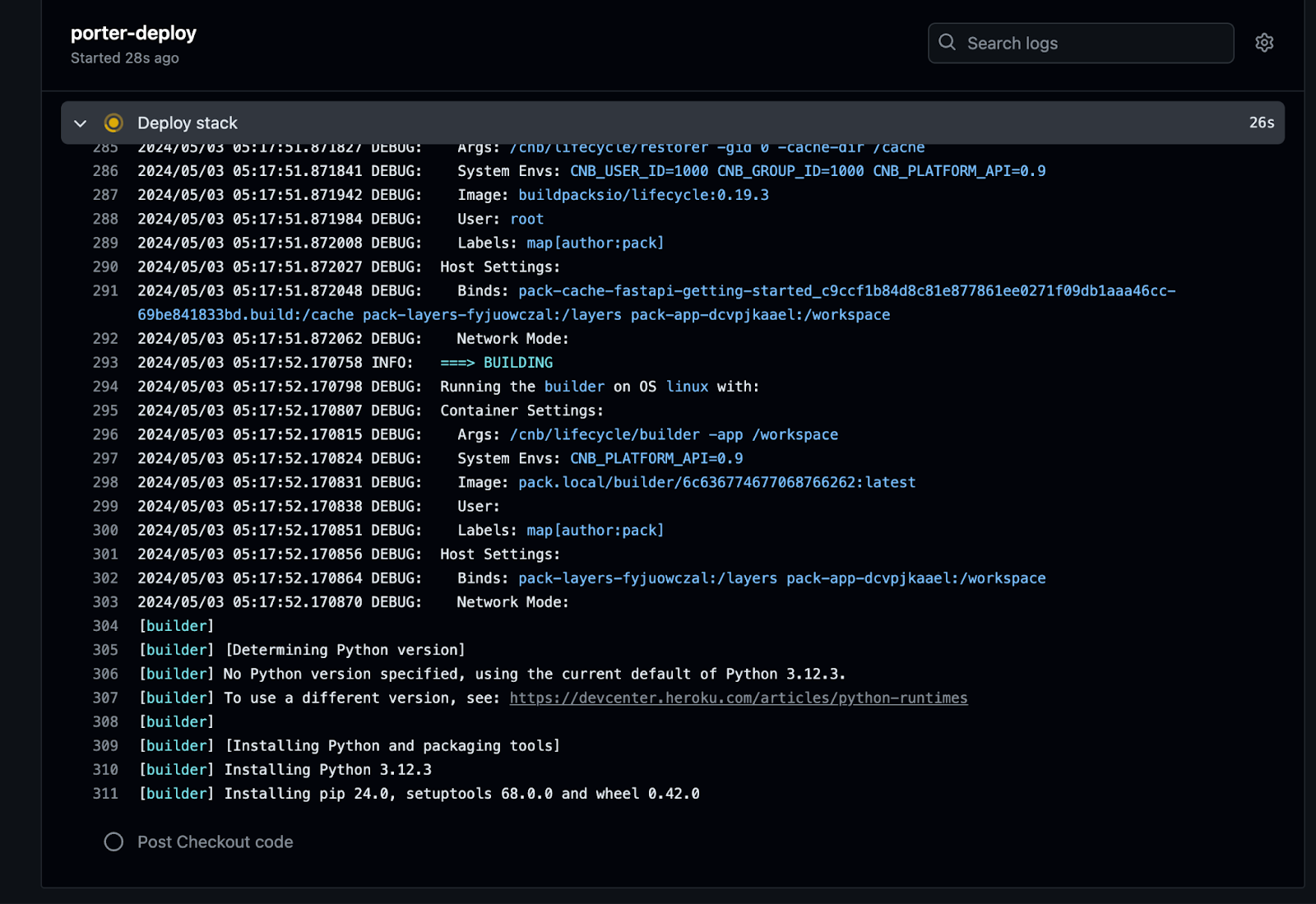

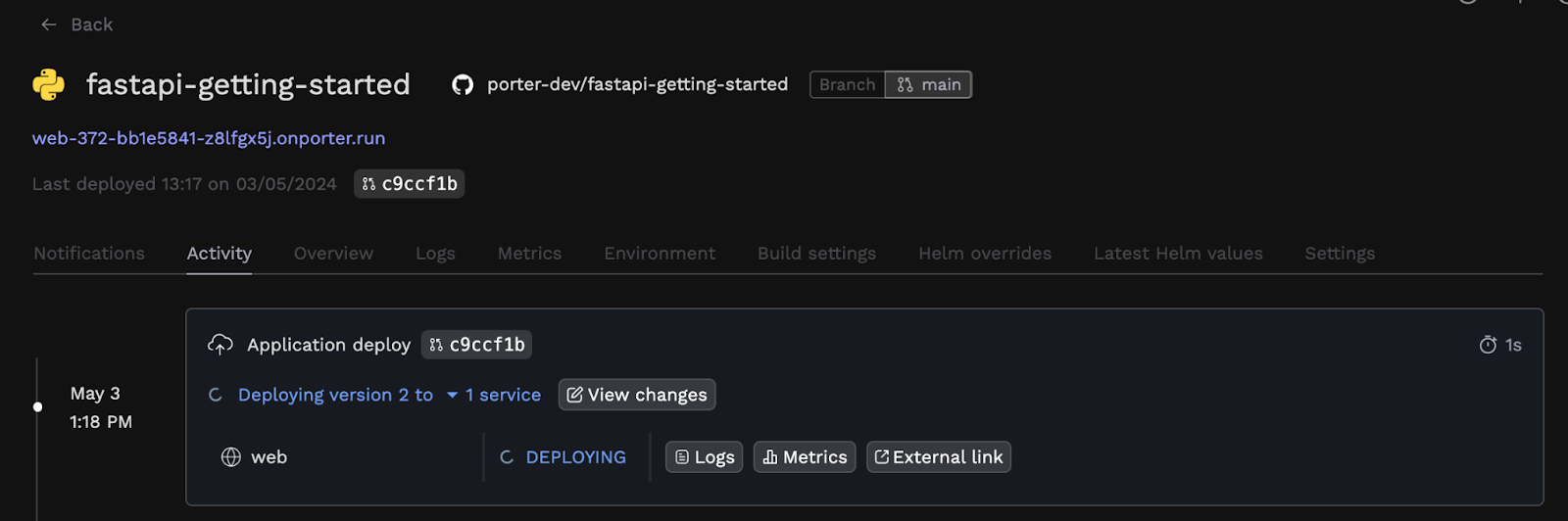

You can also use the Activity tab on the Porter dashboard to see a timeline of your build and deployment as they progress. Once the build succeeds, you'll also be able to see the deployment in action:

Accessing Your App

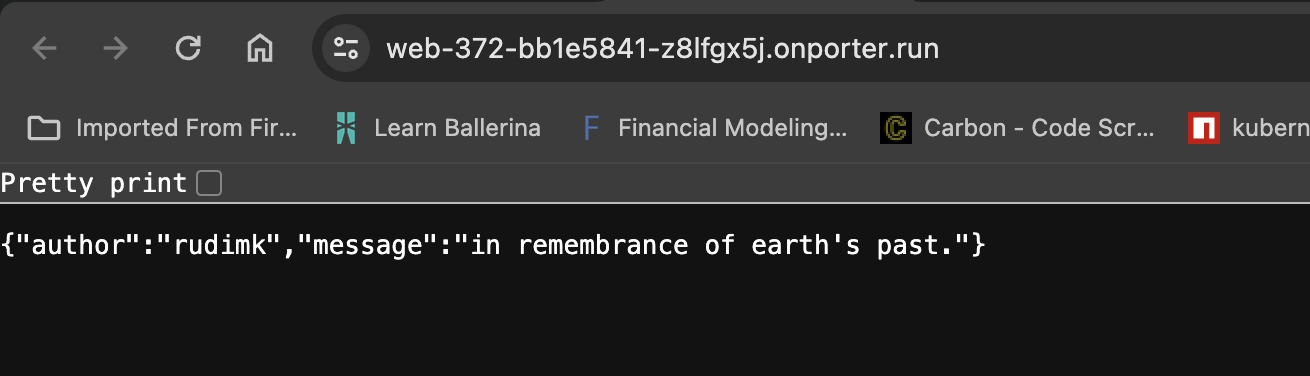

Your FastAPI app's now live on Porter. The Porter-generated unique URL is now visible on the dashboard under your app's name. Let's test it:

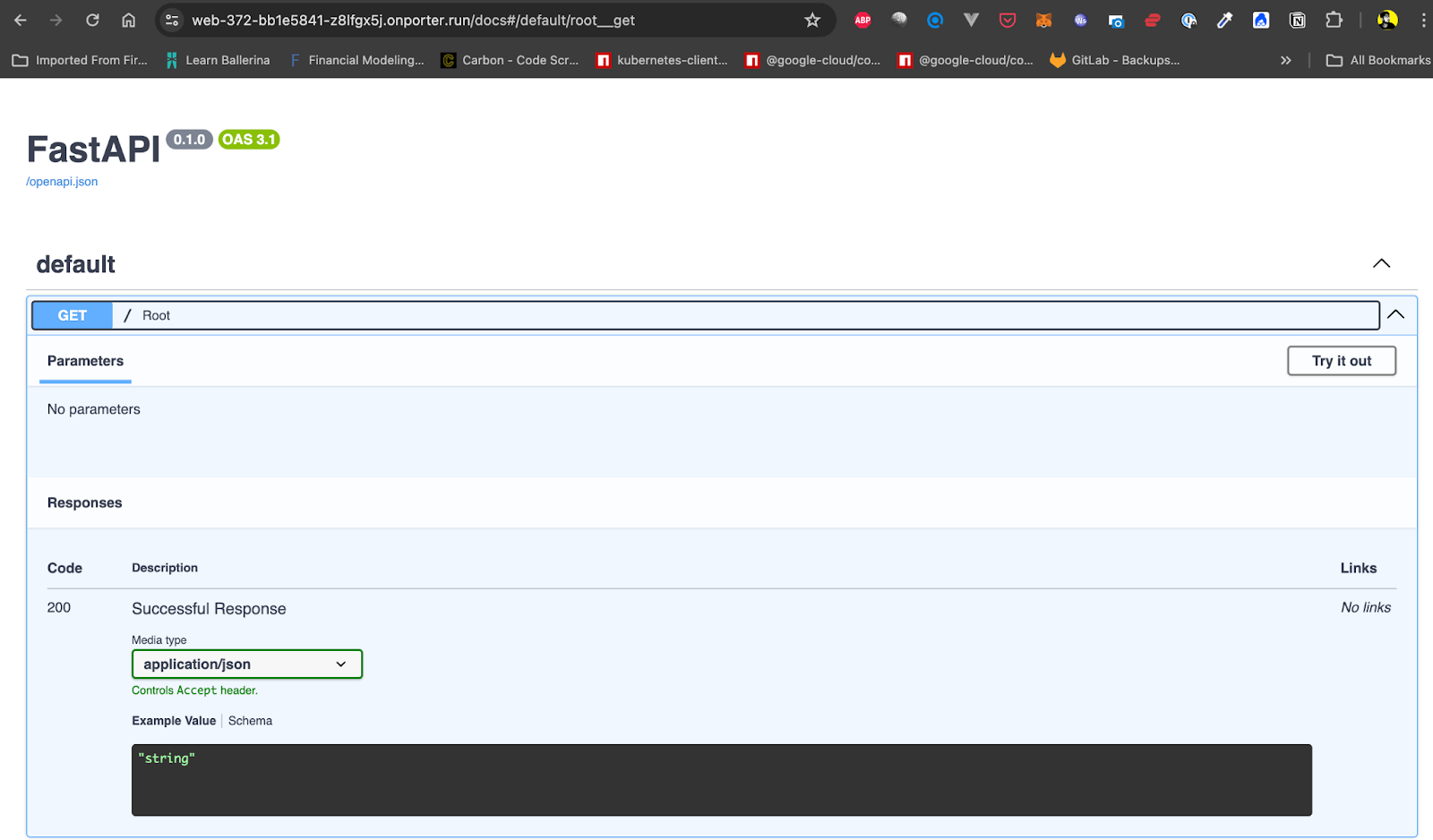

FastAPI applications have native support for the OpenAPI spec, which means every FastAPI app gets an auto-generated OpenAPI documentation page. Let's check that out:

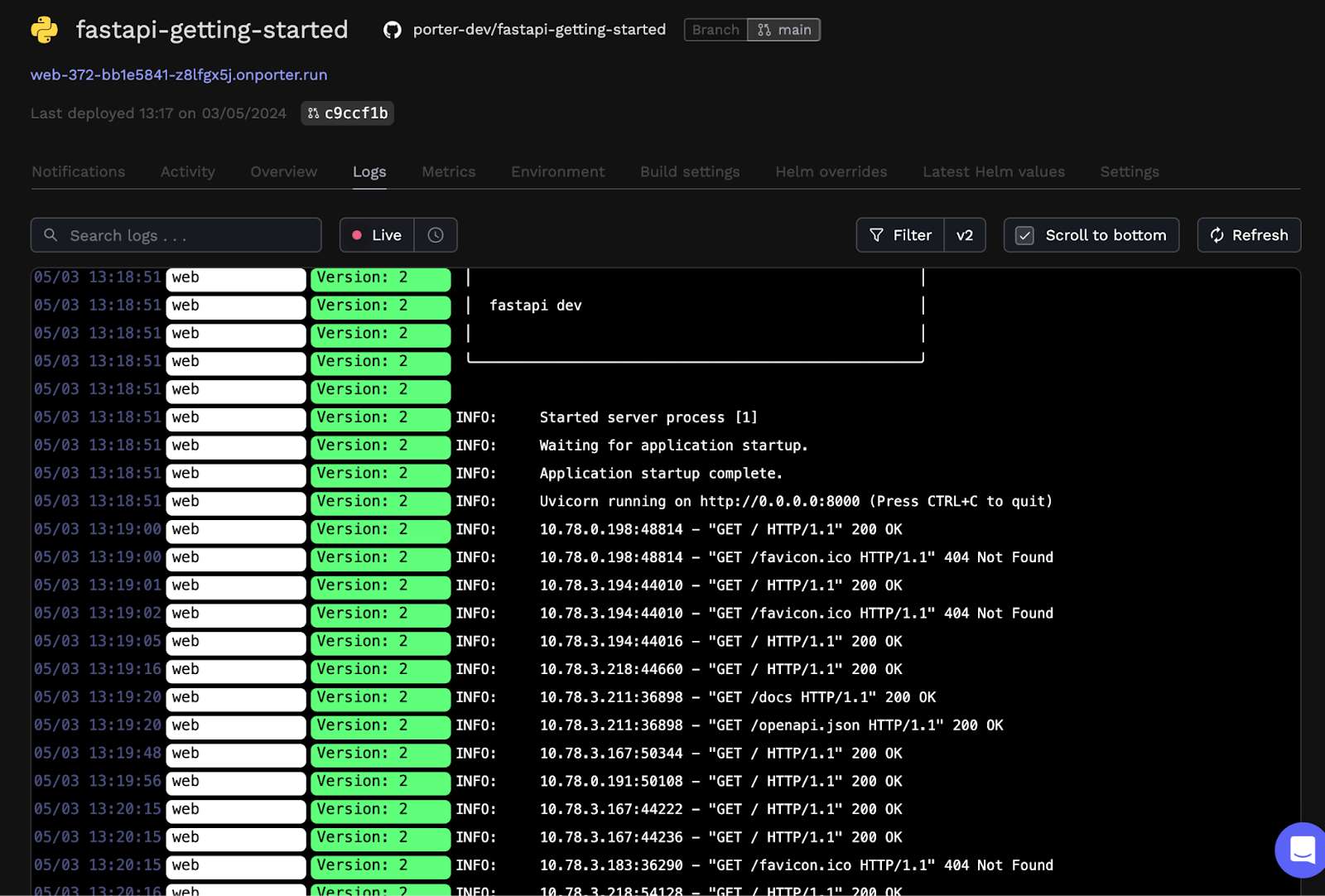

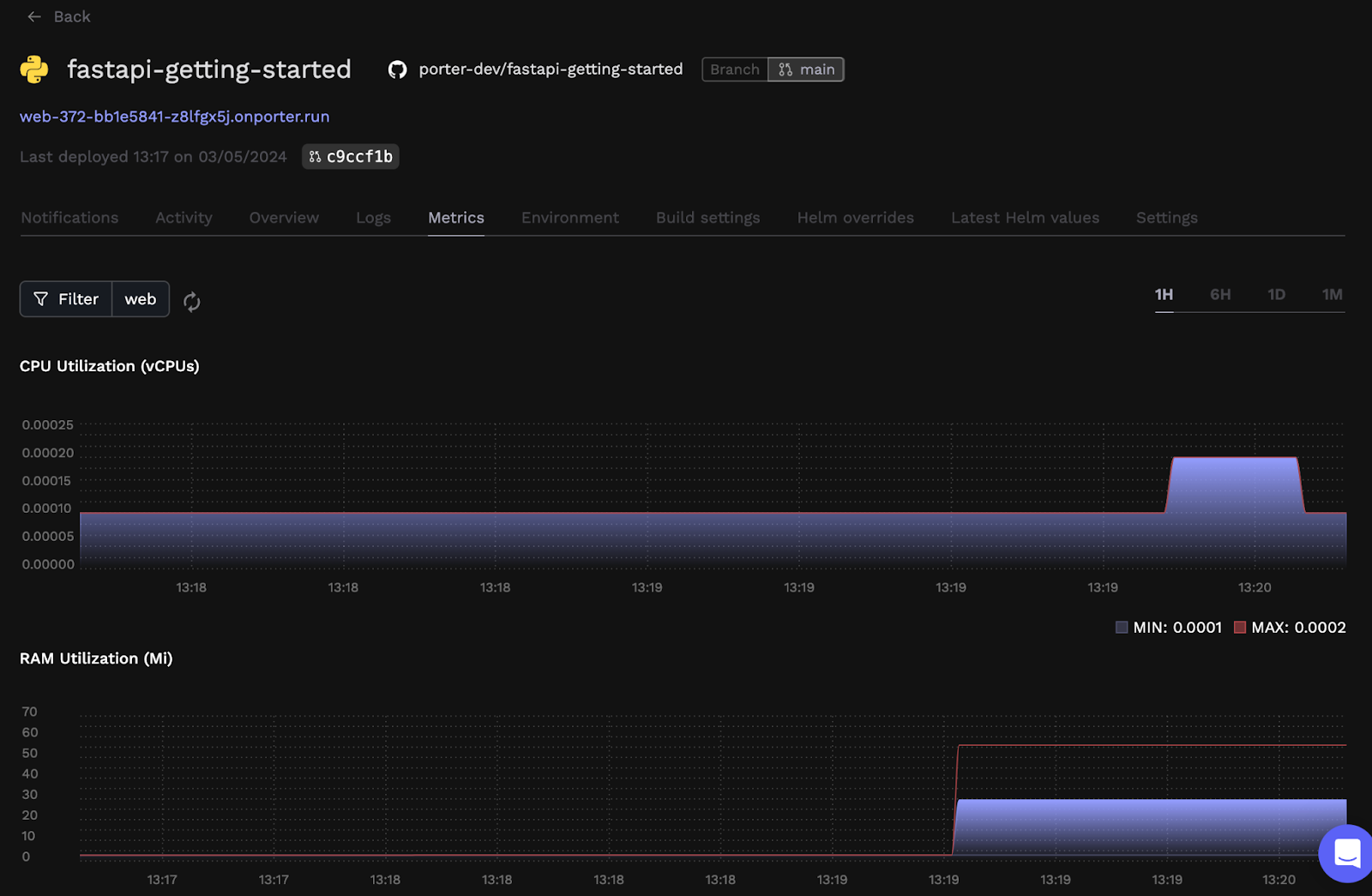
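The same information is available programmatically. By default, FastAPI serves the interactive docs at /docs and the raw schema at /openapi.json. The sketch below fetches that schema and lists the operations it declares. The function names are hypothetical, and the base URL is whatever Porter generated for your app.

```python
import json
from urllib.request import urlopen

HTTP_METHODS = {"get", "post", "put", "patch", "delete", "head", "options"}


def fetch_openapi(base_url: str, timeout: float = 10.0) -> dict:
    """Download the schema from FastAPI's default /openapi.json route."""
    url = f"{base_url.rstrip('/')}/openapi.json"
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def list_operations(spec: dict) -> list[str]:
    """Return entries like 'GET /' for every operation in the schema."""
    operations = []
    for path, item in sorted(spec.get("paths", {}).items()):
        for method in sorted(item):
            if method in HTTP_METHODS:
                operations.append(f"{method.upper()} {path}")
    return operations


# Hand-written stand-in for what a single-endpoint app would expose.
sample_spec = {"openapi": "3.1.0", "paths": {"/": {"get": {"summary": "Read Root"}}}}
print(list_operations(sample_spec))  # ['GET /']
```

Against a live deployment, you would call list_operations(fetch_openapi(base_url)) with base_url set to your app's Porter URL; this doubles as a quick smoke test that the app is reachable.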

You can also check app logs and resource consumption metrics on the dashboard to see how your app's doing:

If you'd like a more robust logging solution, or more powerful monitoring with higher retention, you can use a third-party add-on like Datadog to view these metrics.

Exploring Further

We've seen how you can deploy FastAPI apps onto an AWS EC2 instance using Porter without launching an instance by hand, choosing an Amazon Machine Image, configuring instance details or security groups, or managing configuration files and Infrastructure as Code tools like Terraform, as you would with ECS-managed AWS EC2 instances.

You can also create production datastores on Porter, including a Postgres database on RDS or Aurora. Under the hood, this is simply the AWS-managed database, created in its own VPC in your AWS account. Porter takes care of VPC peering and avoids networking conflicts (such as overlapping IP or CIDR ranges), and provides an environment group that you can inject into your application so it can talk to the database. Besides RDS and Aurora, ElastiCache can also be deployed via Porter.
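Once an environment group is injected, your app reads the connection details from environment variables. The sketch below builds a Postgres connection URL from them. The variable names DB_USER, DB_PASS, DB_HOST, DB_PORT, and DB_NAME are hypothetical; check the environment group in your dashboard for the names Porter actually provides.

```python
import os
from typing import Mapping, Optional
from urllib.parse import quote


def postgres_url(env: Optional[Mapping[str, str]] = None) -> str:
    """Assemble a postgresql:// URL from (hypothetically named) env variables."""
    env = os.environ if env is None else env
    # Percent-encode credentials so characters like "@" or "/" stay unambiguous.
    user = quote(env["DB_USER"], safe="")
    password = quote(env["DB_PASS"], safe="")
    host = env["DB_HOST"]
    port = env.get("DB_PORT", "5432")  # 5432 is the standard Postgres port
    name = env["DB_NAME"]
    return f"postgresql://{user}:{password}@{host}:{port}/{name}"


# Made-up values, for illustration only.
example_env = {
    "DB_USER": "app",
    "DB_PASS": "p@ss/word",
    "DB_HOST": "db.internal",
    "DB_NAME": "appdb",
}
print(postgres_url(example_env))
# postgresql://app:p%40ss%2Fword@db.internal:5432/appdb
```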

Here are a few pointers to help you dive further into configuring/tuning your app:

- Adding your own domain.

- Adding environment variables and groups.

- Scaling your app (Porter takes care of auto scaling).

- Ensuring your app's never offline (we’ll renew and manage the SSL certificate for you).